Eve, here. Most people accept that algorithmic curation works to manipulate political and even broader cultural views. Some might argue that otherwise Facebook wouldn’t have a business. But how does that algorithmic effect work? For example, a friend of mine, who is generally intelligent, analytical, and very well-informed, spends his entire day doing demanding mental work with Fox on in the background. It’s striking how he swallows right-wing tropes, such as the claim that the United States is suffering from a major crime wave. So one might conclude (admittedly from anecdote) that immersion works.

The article below finds a similar, albeit more complex, mechanism. Exposure to algorithm-driven newsfeeds on Twitter does move users toward more right-wing positions, but the effect is not just a matter of how much time they spend consuming that content. Being on the receiving end of algorithmically biased content leads users to follow ideologically aligned accounts, thereby perpetuating the information bias post-indoctrination.

By Germain Gauthier, Assistant Professor, Bocconi University; Roland Hodler, Professor of Economics, University of St. Gallen; Philine Widmer, Assistant Professor, Paris School of Economics; and Ekaterina Zhuravskaya, Professor, Paris School of Economics. Originally published on VoxEU.

Algorithms are selective about what social media users see, raising concerns that they can distort attitudes and influence social and political outcomes. This column reports on an experiment conducted on X in the United States in 2023, in which users were randomly assigned to either an algorithmic feed or a chronological feed. Switching from a chronological feed to an algorithmic feed significantly shifted political opinion toward the Republican Party, but turning the algorithm off did not produce the opposite shift. This asymmetry arose because the algorithm influenced which accounts users chose to follow, leaving a lasting mark on their information environment even after algorithmic curation was removed.

With the advent of feed algorithms, social media streams are no longer simply chronologically ordered lists of posts from the accounts you follow. Instead, the algorithm curates what users see by inserting posts from accounts they don’t follow and reordering the content. This can push content down in the order, effectively hiding posts from followed accounts. Algorithms curate personalized feeds primarily to maximize user engagement with the platform, but may also be used for other purposes. There is widespread concern that social media algorithms can distort attitudes and influence social and political outcomes (e.g. Pariser 2011, Settle 2018, Sunstein 2018, Persily and Tucker 2020, Rose-Stockwell 2023, Braghieri et al. 2025). These algorithms may promote content that attracts users’ attention, such as extreme and harmful posts. They may also prioritize posts that reinforce users’ existing beliefs and misconceptions, contributing to the creation of a polarized information environment, the so-called filter bubble.

Surprisingly, however, a previous large-scale, rigorous experiment found that political attitudes were unaffected by turning off the feed algorithm (Guess et al. 2023). In particular, research conducted with Meta during the 2020 US election showed that switching users’ feeds from algorithmically curated to chronological changed what users saw in their feeds and lowered engagement, but had no measurable impact on political attitudes, partisanship, or polarization.

This finding leaves important questions unanswered. Are the commonly expressed concerns about algorithms unjustified? Can exposure to algorithmic feeds influence attitudes in ways that persist even after the algorithms are turned off? If so, is there an asymmetry between the effects of switching algorithmic feeds on and off? Do the results generalize to other platforms? In a highly polarized and partisan environment, should we expect some political attitudes to be more malleable and more susceptible to algorithmic influence than others?

The experiment

A new paper (Gauthier et al. 2026) presents the results of an experiment conducted on X (formerly Twitter) in the summer of 2023. X provided us with a valuable opportunity to study feed algorithms without platform cooperation, because users can choose between two feeds. One is a chronological feed (the “Following” tab) that displays posts from accounts you follow in chronological order, and the other is an algorithmic feed (the “For You” tab) that reorders content and adds posts from accounts you don’t follow. We recruited approximately 5,000 active X users, randomly assigned them to either the algorithmic feed or the chronological feed, and paid them to maintain their assigned feed setting for seven weeks.

As a result, some participants had to switch their feed setting, while others kept the setting they had previously used. This design allowed us to study two different treatments: turning the algorithm on for users who had previously relied on the chronological feed, and turning the algorithm off for users who had previously used the algorithmic feed. We measured the impact of each intervention on user engagement, political views, and the choice of accounts to follow.
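The logic of the two treatments can be sketched in a few lines of Python. This is only an illustration of the comparison described above, not the authors’ estimation code: the `Participant` record, the `treatment_effect` helper, and the toy outcome values (on a made-up 0 to 10 scale) are all hypothetical, and a real analysis would add controls and confidence intervals.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Participant:
    initial_feed: str   # feed used before the study: "chronological" or "algorithmic"
    assigned_feed: str  # feed randomly assigned for the study period
    outcome: float      # any post-treatment outcome (hypothetical 0-10 scale)


def treatment_effect(participants, initial_feed):
    """Difference in mean outcome between switchers and stayers,
    computed only among users who started on `initial_feed`."""
    group = [p for p in participants if p.initial_feed == initial_feed]
    switched = [p.outcome for p in group if p.assigned_feed != initial_feed]
    stayed = [p.outcome for p in group if p.assigned_feed == initial_feed]
    return mean(switched) - mean(stayed)


# Made-up toy data, for illustration only (not results from the study).
toy = [
    Participant("chronological", "algorithmic", 6.0),
    Participant("chronological", "algorithmic", 8.0),
    Participant("chronological", "chronological", 5.0),
    Participant("chronological", "chronological", 5.0),
    Participant("algorithmic", "chronological", 4.0),
    Participant("algorithmic", "algorithmic", 4.0),
]

effect_switch_on = treatment_effect(toy, "chronological")   # algorithm turned on
effect_switch_off = treatment_effect(toy, "algorithmic")    # algorithm turned off
```

Because assignment is random within each group of initial users, switchers and stayers are comparable, so each difference in means can be read as the effect of one treatment.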

Switching the algorithm on shifted opinions to the right; switching it off had no effect

First, as one would expect given that the algorithm is designed to maximize engagement, users who switched from the chronological feed to the algorithmic feed spent more time on X than users who stayed on the chronological feed. Our second finding is new relative to the previous literature: turning on the algorithm strongly and significantly affected political attitudes. After seven weeks of exposure to the algorithmic feed, users’ political views shifted in a pro-Republican direction. Compared to those who continued to use the chronological feed, these users were more likely to prioritize policy issues typically emphasized by Republicans, such as inflation, immigration, and crime, over policy issues typically emphasized by Democrats, such as health care and education.

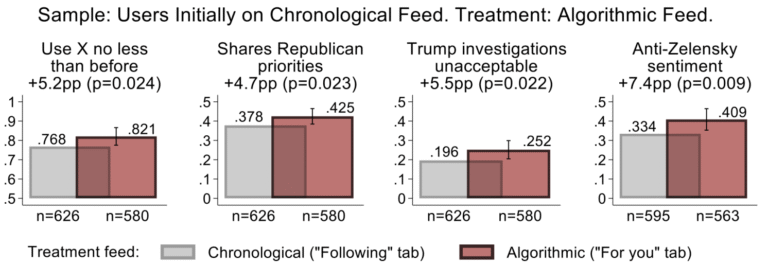

They were also more likely, on average, to believe that a criminal investigation into Donald Trump was unacceptable because it undermined democracy and the rule of law. They were also more likely to take pro-Kremlin positions regarding Russia’s invasion of Ukraine and express negative sentiments toward the Ukrainian leadership and Joe Biden’s support for Ukraine. Some of these effects are illustrated with selected results in panel (a) of Figure 1.

Figure 1 Effect of switching the algorithm on and off on engagement and selected political attitudes

(a) Users initially on the chronological feed who switched to the algorithmic feed

(b) Users initially on the algorithmic feed who switched to the chronological feed

Note: Selected attitude results. Panel (a) shows the impact of switching a user from the chronological feed to the algorithmic feed. Panel (b) shows the impact of switching a user from the algorithmic feed to the chronological feed. Bars indicate average effects on engagement and the selected attitudes, with 95% confidence intervals reported; see Gauthier et al. (2026) for more information.

Source: Gauthier et al. (2026).

These results are driven by effects among users who self-reported as Republicans or Independents in pre-treatment surveys. They are consistent with the general finding in the persuasion literature that persuasion is most effective with audiences that are already positively predisposed to the message (e.g., Adena et al. 2015).

Equally surprising is what we don’t find. First, switching users from the algorithmic feed to the chronological feed had essentially no effect on political attitudes (Figure 1, panel (b)). This is fully consistent with the Meta study (Guess et al. 2023) and supports the external validity of the findings of both the Meta experiment and ours.

Second, turning the algorithm on or off had no effect on self-reported partisanship or polarization. This suggests that algorithms may change views about current policy issues and policy priorities, but not users’ more rigid partisan identities.

How algorithms leave a lasting footprint

At first glance, the asymmetry in the effects of switching algorithms on and off on political attitudes is puzzling. If algorithmic curation pushes opinion in one direction, why doesn’t removing the algorithm reverse the effect? The answer lies in how algorithms shape user behavior.

To understand this mechanism, we analyzed both the content users see in their feeds and the accounts they choose to follow. First, we asked users to run a dedicated Google Chrome extension that downloaded their feed content under both feed settings. These data provided direct evidence of what the X algorithm promoted in the summer of 2023. Compared to the chronological feed, the algorithmic feed showed more posts that had already generated high engagement (likes, comments, reposts).

When it came to politics, the algorithmic feed contained a significantly higher proportion of political content, and within that content it prioritized right-wing posts far more than left-wing posts. There were significantly more posts from political activists (defined as ordinary users who post a lot about politics and who can’t be categorized as media, governments, or organizations) on both the right and the left, but fewer posts from traditional news organizations on both the right and the left. Although the share of right-wing content differed significantly between the feeds of Republican-leaning and Democratic-leaning users, the algorithmic feed raised the share of right-wing content among all political content significantly for both groups. The content promoted by the algorithm is shown in Figure 2. (The difference in content between the chronological and the algorithmic feed setting is the same whether or not user fixed effects are included.)
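To make the two quantities in this comparison concrete, here is a minimal Python sketch of how the share of political content, and the share of right-wing content within political content, could be computed from labeled posts. The `content_shares` function, the post labels, and the toy feeds are hypothetical; the numbers are invented and do not reproduce the study’s data or classification method.

```python
def content_shares(posts):
    """Return (share of posts that are political,
    share of political posts that are right-wing).

    Each post is a dict with a boolean "political" flag and a
    "leaning" of "right", "left", or None (hypothetical labels)."""
    political = [p for p in posts if p["political"]]
    political_share = len(political) / len(posts)
    if not political:
        return political_share, 0.0
    right = sum(1 for p in political if p["leaning"] == "right")
    return political_share, right / len(political)


# Invented toy feeds, for illustration only.
chronological_feed = [
    {"political": True, "leaning": "right"},
    {"political": True, "leaning": "left"},
    {"political": False, "leaning": None},
    {"political": False, "leaning": None},
]
algorithmic_feed = [
    {"political": True, "leaning": "right"},
    {"political": True, "leaning": "right"},
    {"political": True, "leaning": "right"},
    {"political": True, "leaning": "left"},
]

chrono_shares = content_shares(chronological_feed)  # (0.5, 0.5)
algo_shares = content_shares(algorithmic_feed)      # (1.0, 0.75)
```

Keeping the two shares separate matters: a feed can show more political content overall without changing its right-left mix, and the text above reports that the algorithmic feed did both.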

Figure 2 What the algorithm promotes, by self-declared partisanship

(a) Democrats

(b) Republicans and Independents

Note: Feed content by self-reported partisanship. Average content users see under each feed setting: chronological feed (gray) and algorithmic feed (red). 95% confidence intervals are reported. For more information, see Gauthier et al. (2026).

Source: Gauthier et al. (2026).

Second, we found that exposure to the algorithm changed the accounts users chose to follow. Users who switched to the algorithmic feed were more likely to follow political activist accounts, especially those of right-wing activists. In contrast, users who switched to the chronological feed showed no change in the accounts they followed. This explains the asymmetry in effects: the algorithm directs users to new sources, and users continue to follow those sources even after the algorithm is turned off (i.e. they do not actively unfollow those accounts). The influence of these sources therefore persists even when the algorithmic feed is turned off.
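The persistence mechanism can be illustrated with a deliberately simplified toy model in Python. It is not the authors’ model: the `simulate_follows` function and the account names are hypothetical, and the assumption that a user follows every account the algorithm surfaces is an exaggeration chosen to keep the sketch short.

```python
def simulate_follows(initial_follows, recommended, schedule):
    """Track the set of followed accounts across periods.

    In an "algorithmic" period the user is exposed to `recommended`
    accounts and (by assumption) follows them. In a "chronological"
    period nothing new is surfaced. Accounts are never unfollowed."""
    followed = set(initial_follows)
    history = []
    for feed in schedule:
        if feed == "algorithmic":
            followed |= set(recommended)
        history.append(frozenset(followed))
    return history


# Hypothetical account names, for illustration only.
# Switching ON: the follow set grows and stays larger afterwards.
switch_on = simulate_follows(
    {"news_outlet"}, {"activist_a"}, ["algorithmic", "chronological"]
)

# Switching OFF: a former algorithmic-feed user already follows the
# activist account, and the chronological feed does not remove it.
switch_off = simulate_follows(
    {"news_outlet", "activist_a"}, {"activist_a"}, ["chronological"]
)
```

In this sketch, turning the algorithm on changes what the user follows, while turning it off changes nothing, because the chronological feed still draws on the accounts accumulated earlier.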

Implications for policy and platform design

These results have important implications for the debate about the regulation of social media algorithms.

Our results strongly suggest that social media feed algorithms are not politically neutral. Their influence can outlast the algorithms themselves, because they shape not only what people believe but also users’ online behavior, most notably the choice of accounts to follow. This calls for a serious discussion about regulating feed algorithms.

Furthermore, our findings highlight that, at least in the short term, algorithms can shape political attitudes without increasing self-reported partisan polarization or changing partisan identity. This challenges the general tendency to view political influence as solely polarizing. Subtle shifts in issue priorities and beliefs about current events can have equally significant implications for democratic outcomes. Moreover, as people use these platforms over many years, we cannot rule out the possibility that their influence on such priorities and beliefs accumulates over time, eventually changing their more deeply held political identities as well.

Finally, our study highlights the importance of studying platforms independently and in real-world settings. Systematic monitoring of social media algorithms is necessary because algorithms, content, and user behavior can evolve rapidly and their impact depends on platform-specific design choices and incentives (Aridor et al. 2025).

See original post for references